Windows Phone 7: UDP performance

Published on Thursday, September 22, 2011 9:34:00 AM UTC in Programming

Update 2011-11-17: A recent update for HTC phones is confirmed to fix the issue for the Trophy (firmware 2250.21.51002.161), Mozart (firmware 2250.21.51007.401) and HD7. The last remaining phone model affected (that I know of) is the Dell Venue Pro.

Update 2011-10-01: A recent firmware update from Samsung fixes this bug for the Omnia 7! Read more on the topic here.

Update 2011-09-30: This article is compatible with the final version of Windows Phone 7.1 "Mango". All the findings here are (unfortunately) confirmed using the RTW version of the developer tools as well as real phone devices updated to the final version of Windows Phone OS 7.5.

UDP is a popular protocol in networking when you want to establish simple communication that does not require all packets to be reliably transmitted. The fact that with UDP (unlike with TCP) all packets are transferred individually somewhat simplifies the application-level protocol and the algorithms required to parse the data on the receiving end (you can find a post of mine about TCP on the phone with sample code here). And finally, UDP is perfect for time-sensitive applications that want pseudo real-time communication without having to wait for dropped or delayed packets to arrive or be resent. With the Mango release, UDP support was added to Windows Phone 7. Unfortunately, some of the fundamental benefits of UDP appear to be broken by the current implementation.

Setting up a sample

To test UDP, I set up a simple sample in the following way: on the client side, a new thread is spawned that handles all the communication. In particular, it makes use of the Socket and SocketAsyncEventArgs classes to set up the required parameters like the remote endpoint to send to, and to handle errors and notify about successful send operations.

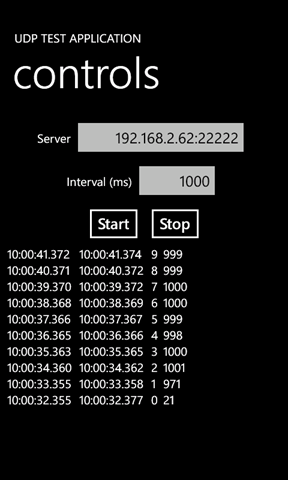

The simple client UI lets you start and stop the transmission of data, and specify the target address and the interval at which data should be sent (in milliseconds). Both the start time and end time of the transmission are recorded; the transferred data is simply a counter that is incremented with each transferred packet, plus the elapsed time on the phone since the last packet was sent. The counter makes it easy to spot lost packets during the tests.
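To illustrate how such a payload can be used to spot lost packets, here is a minimal sketch in Python. It is not the code used in these tests (the sample itself is a .NET application); the wire layout of two 32-bit unsigned integers and the names `encode_packet`, `decode_packet` and `find_missing` are assumptions made purely for illustration.

```python
import struct

# Assumed layout (hypothetical): 32-bit unsigned packet counter followed by a
# 32-bit unsigned elapsed time in milliseconds, both in network byte order.
PACKET_FORMAT = "!II"


def encode_packet(counter, elapsed_ms):
    """Pack the counter and the elapsed time into an 8-byte datagram payload."""
    return struct.pack(PACKET_FORMAT, counter, elapsed_ms)


def decode_packet(data):
    """Unpack a payload produced by encode_packet into (counter, elapsed_ms)."""
    counter, elapsed_ms = struct.unpack(PACKET_FORMAT, data)
    return counter, elapsed_ms


def find_missing(counters):
    """Return the packet numbers absent from the received counters."""
    if not counters:
        return []
    seen = set(counters)
    return [n for n in range(min(seen), max(seen) + 1) if n not in seen]
```

Feeding the counters of all received datagrams into `find_missing` yields the gaps in the sequence, which is all that is needed to detect loss in a stream of consecutively numbered packets.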

This is a screenshot from the emulator. Here is how to read the data:

10:00:41.372 10:00:41.374 9 999

10:00:40.371 10:00:40.372 8 999

Each line represents a sent packet. The first part is the starting time, taken before the "SendToAsync" method of the socket is called. The second time is the time at which the "Completed" event was raised to signal successful sending (if no error occurred). Please note that this is not a confirmation that the packet has been received by the other end - there is no such mechanism in UDP. Then follows the packet number, and the last part is the elapsed time since the last packet was sent, as an indicator of how well the desired interval is adhered to.

So for the above screenshot and sample numbers, the results are: the interval between packets was set to 1,000 milliseconds. The topmost sample line (packet 9) shows that it took two milliseconds from invoking the SendToAsync method to receiving the Completed event. Sending the packet was started 999 milliseconds after the last one had been sent, which is close enough to the desired interval for our tests. You should always ignore the first few packets in the report, as the reference time value is missing at first.

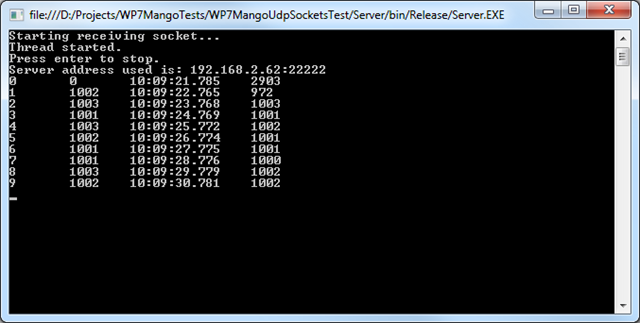

The server side

At the other end, a very simple .NET console application sits and receives the packets. Again all communication is done on a separate thread. In addition to the data transferred from the phone, a time stamp generated at receive time is printed to the console, as well as the elapsed time in milliseconds since the last packet was received. That last value also should be close to the desired interval in optimal situations.
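A receiver of this kind is only a few lines of code. The following Python sketch shows the idea; it is not the .NET console application used here, and the port number 11000, the output format and the names `elapsed_ms` and `serve` are placeholders I made up for illustration.

```python
import socket
import time
from datetime import datetime


def elapsed_ms(now, last_arrival):
    """Milliseconds since the previous packet, or None for the first packet."""
    if last_arrival is None:
        return None
    return round((now - last_arrival) * 1000)


def serve(host="0.0.0.0", port=11000, max_packets=None):
    """Print one line per received datagram (port 11000 is an arbitrary choice)."""
    last_arrival = None
    received = 0
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((host, port))
        while max_packets is None or received < max_packets:
            data, sender = sock.recvfrom(2048)
            # Monotonic clock for intervals, wall clock only for display.
            now = time.monotonic()
            stamp = datetime.now().strftime("%H:%M:%S.%f")[:-3]
            print(stamp, elapsed_ms(now, last_arrival), sender[0], data.hex())
            last_arrival = now
            received += 1
```

Calling `serve()` blocks and prints the receive timestamp, the gap to the previous datagram, the sender address and the raw payload. The interval is measured with a monotonic clock so that adjustments of the system time do not distort the numbers.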

As you can see, the elapsed times reported on the phone between sent messages are very similar to the ones we experience on the PC when we receive them. Of course, looking at numbers in the emulator is not very conclusive, so let's move on and use a real device for testing.

Testing on real hardware

Note: First of all I want to emphasize that I've run all the tests with the sample compiled in release mode, and the device disconnected from the PC as well as cleanly rebooted, to avoid any side effects from debugging, debug builds or previously run applications. The following data is taken from a Samsung Omnia 7.

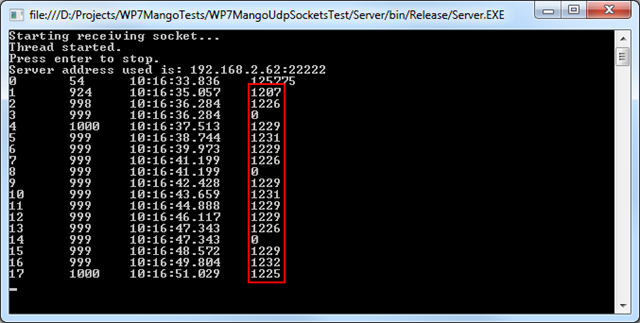

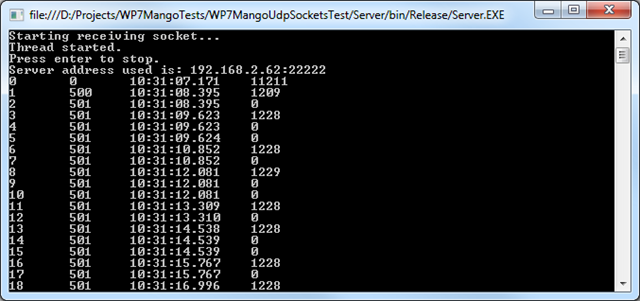

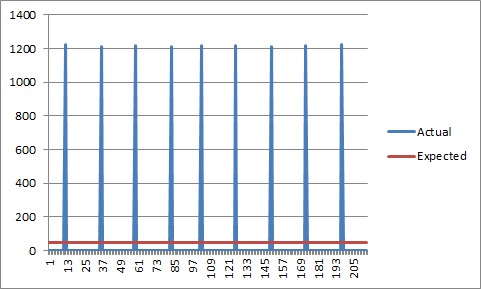

When you run the above sample on a real device, at first you will see a positive surprise compared to the emulator. Where sending the packets took one to two milliseconds there, it seems to consistently require only one millisecond on the device, which is a slight improvement. On second glance however, something rather odd becomes apparent. This is what is reported at the server side now:

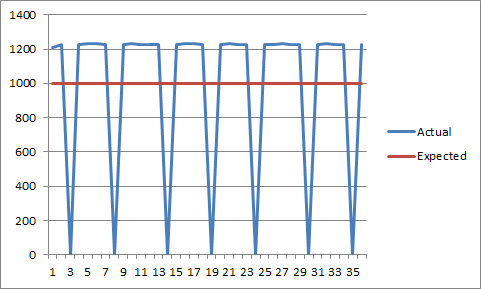

Huh. On the sending side - which is the phone of course - the elapsed time between the individually sent packets is still accurately reported as roughly 1,000 milliseconds. On the receiving end however, the elapsed times are significantly higher (by more than 20%). To make up for this delay, every few messages two packets seem to arrive at the same time, so on average the same elapsed time between packets is achieved.

More analysis

Like I said, UDP is interesting for pseudo real-time communication, for example in multiplayer games, where you want to keep the latency ("lag") as low as possible. For scenarios like this, updating data once a second is far too long an interval, not suitable for fast-paced games at all. Usually you want to target much smaller intervals. Let's see what happens then.

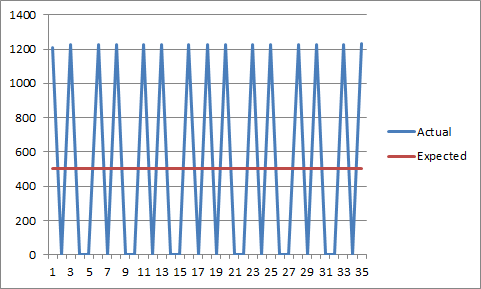

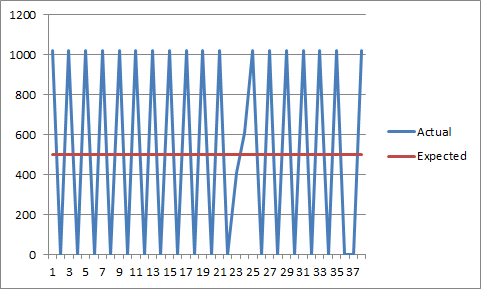

Here is the data for a target interval of 500 milliseconds:

As you can see, the device still reports it's sending accurately at 500 millisecond intervals, but on the receiving end, again we see the oscillation between ~1200 and 0 milliseconds, just like before.

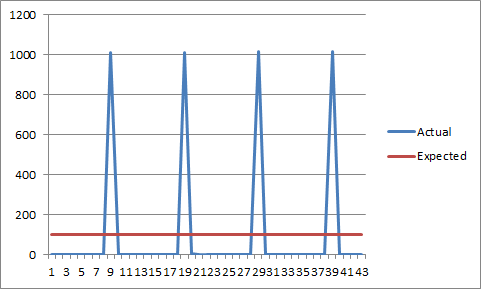

Now with a target interval of 100 milliseconds:

I think you can already guess what the data for even lower intervals looks like:

Consequences and solutions

The most dramatic insight taken from the above findings is that the current behavior is far from being usable for scenarios like multiplayer gaming, at least for the majority of games. Introducing a latency of > 1.2 seconds is bad already. The fact that the messages are apparently sent and then received in bursts in such a dramatic way is even worse.

I wasn't able to force a different behavior of the phone when sending data, not even by using nasty workarounds like adding dummy data to the datagrams to artificially increase the message size. Of course I cannot eliminate the possibility that I'm missing something fundamental here, or that I'm doing something wrong. However, I'm pretty much reusing the sample code for socket programming on the phone that is available from MSDN, and I have years of experience with network programming, in particular with UDP sockets on mobile devices and on devices running e.g. Windows CE. Both facts make me believe that this is indeed a flaw of the current implementation on the phone. It may not even be a real bug; I can imagine that this is caused by some optimization of the phone OS for network transmission. However, in cases like these, it's really counterproductive to take away control from the developer (if I'm willing to go as low level as using sockets, I want to have full control, right?).

At the moment, I don't know of a complete solution for the problem. You can smooth the data on the receiving end, for example by transmitting a timestamp with each message and then releasing the received messages based on these timestamps, to make them equidistant again (or, more precisely, to restore the time distance they were sent at). This of course introduces even more latency, so it is only interesting if you want a smooth data stream (e.g. for animations or objects moved by remote commands) and can live with the huge lag. For real-time communication, this is unacceptable.
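The smoothing idea can be sketched as a simple playout schedule. This is a minimal illustration in Python under my own assumptions, not tested code from the sample: the function name `playout_times`, the tuple layout and the choice of the first packet as the reference point are all hypothetical.

```python
def playout_times(packets, playout_delay_ms):
    """Compute a release time for each packet from its send timestamp.

    packets: list of (send_ts_ms, arrival_ms) tuples, where send_ts_ms comes
    from the sender's clock and arrival_ms from the receiver's clock.
    The first packet serves as the reference, so the two clocks do not
    need to be synchronized.
    """
    if not packets:
        return []
    first_send, first_arrival = packets[0]
    schedule = []
    for send_ts, arrival in packets:
        # Reproduce the sender's spacing, shifted by a fixed playout delay.
        target = first_arrival + playout_delay_ms + (send_ts - first_send)
        # A packet cannot be released before it has actually arrived.
        schedule.append(max(target, arrival))
    return schedule
```

The playout delay has to be at least as large as the worst burst delay, otherwise late packets are still released on arrival and the stream stutters. With the ~1.2 second bursts observed here, that means adding more than a second of lag on top of everything else.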

As the note at the beginning of this article says, these tests were performed with a pre-release version of "Mango", so I still have hope that this will be fixed in the final release. Also, feel free to do your own tests with your devices and/or point me to mistakes I have made, or to further resources on how this problem could be fixed or what causes it. At the moment, there's really not much material or discussion on this problem to be found (here's a post by someone on SO who has similar problems).

Link to the Connect bug entry created for this

Appendix: Data from HTC Mozart

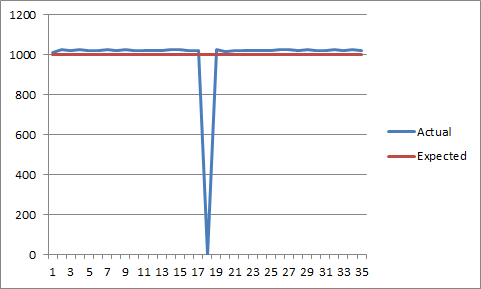

I was now able to run the same application on an HTC Mozart to see whether this is an issue that only affects the Samsung Omnia 7 I have used above. Here are the results for an interval of 1,000 milliseconds:

As you can see, this first data indeed seems much better than the one from the Omnia 7. The HTC seems to adhere better to the desired interval. But we still see these occasional undesired spikes where messages seem to arrive at the same time to make up for the slightly longer actual intervals and the sum of deviation that piles up.

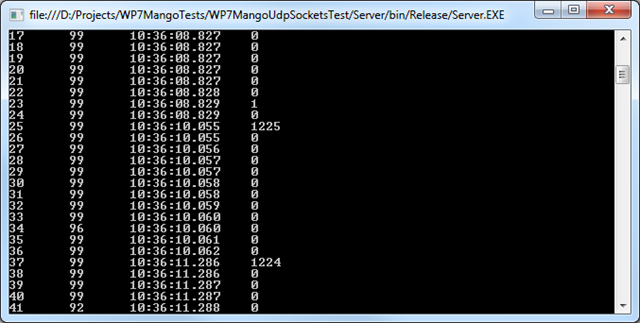

Unfortunately, things only looked better by coincidence. In reality, the situation is just as bad as with the Omnia 7, which becomes clear when we look at other data. Here is the result for 500 milliseconds:

This seems to lead to the conclusion that the HTC shows the same behavior as the Samsung, but the "magic number" for the actual interval seems different. Where the Samsung showed constant values of ~1,230 milliseconds, the HTC uses ~1,020. This explains why the data didn't look as bad for a desired interval of 1,000 milliseconds, apart from the occasional spikes.
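One way to reason about these numbers is a toy model in which the phone only hands queued datagrams to the network at fixed points in time. To be clear, this is an assumption on my part for illustration; I do not know what the phone actually does internally, and the function `arrival_gaps` is hypothetical, not part of the test application. The Python sketch below computes the gaps the receiver would see under that model.

```python
def arrival_gaps(send_interval_ms, flush_period_ms, packet_count):
    """Receive-side gaps if packets only leave the phone on fixed flush ticks.

    Assumed model: packet n is sent at n * send_interval_ms and is held back
    until the next multiple of flush_period_ms. Network delay is ignored.
    """
    arrivals = []
    for n in range(packet_count):
        sent_at = n * send_interval_ms
        # Integer ceiling division: first flush tick at or after the send time.
        ticks = -(-sent_at // flush_period_ms)
        arrivals.append(ticks * flush_period_ms)
    return [later - earlier for earlier, later in zip(arrivals, arrivals[1:])]


print(arrival_gaps(1000, 1230, 8))  # [1230, 1230, 1230, 1230, 1230, 0, 1230]
print(arrival_gaps(500, 1230, 7))   # [1230, 0, 1230, 0, 1230, 0]
```

With a period of 1,230 milliseconds the model reproduces the pattern seen on the Omnia 7: constant gaps above the desired interval with an occasional zero at 1,000 milliseconds, and an alternation between the period and zero at 500 milliseconds. Passing 1,020 as `flush_period_ms` corresponds to the value observed on the HTC.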

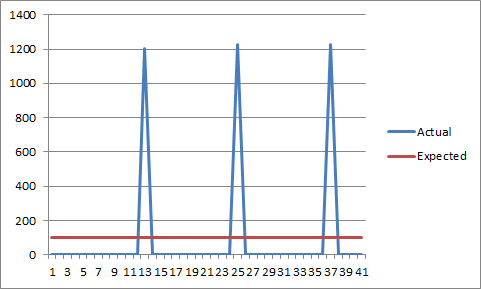

This is confirmed by the final data, which shows the results for 100 milliseconds:

So it is not an issue specific to a certain brand or model, but shows in similar ways on other devices too. Again, if you want to point out improvements for the code, or provide data from additional phones, please contact me.

Tags: Mango · Networking · Sockets · UDP · Windows Phone 7